That's exactly what we've been testing at Energy Intelligence, and the results have been more exciting than we expected.

The Experiment: Gemma 4 + MCP Bridge

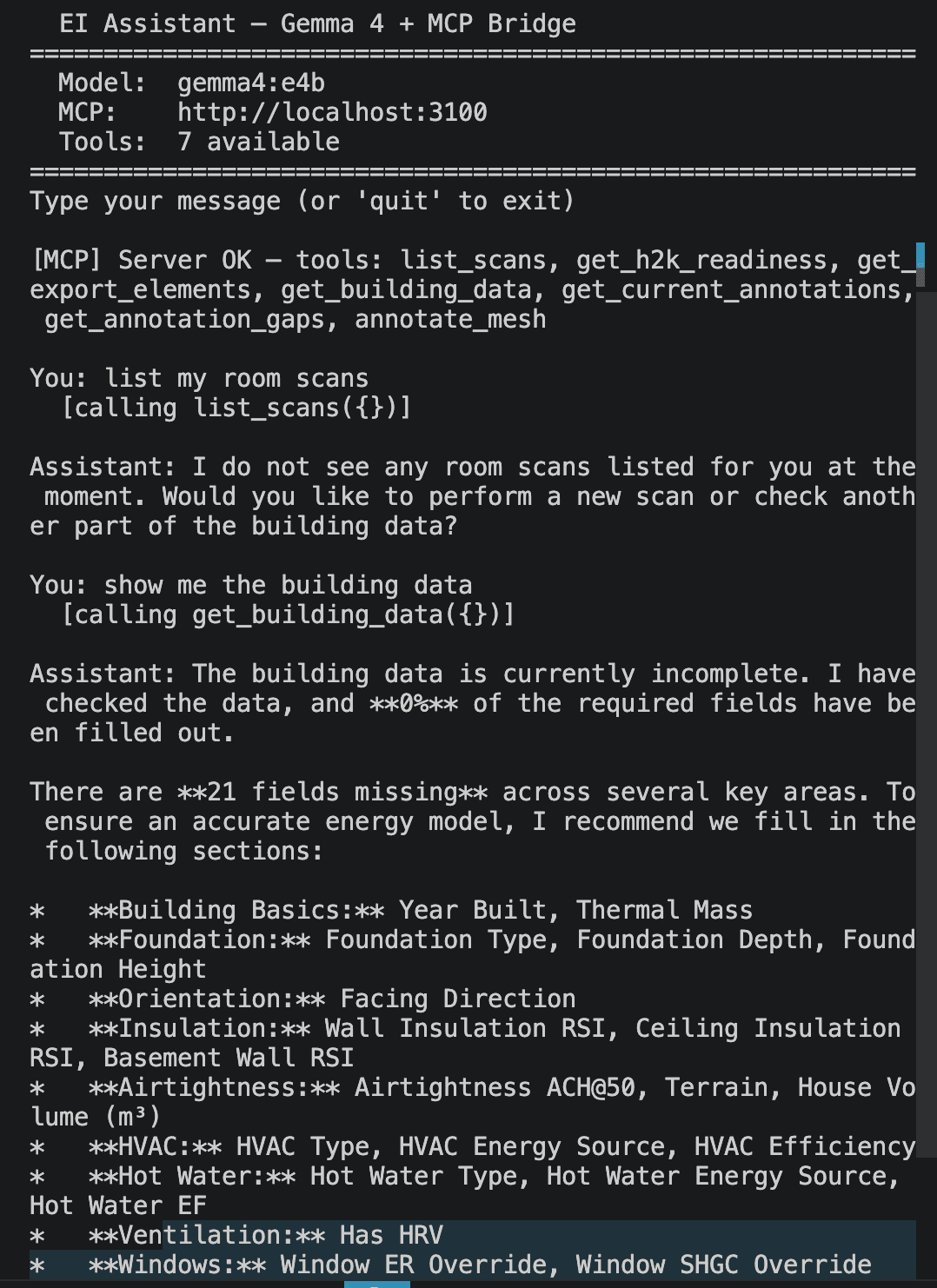

We hooked up Gemma 4, Google's latest open source language model, to our platform via an MCP (Model Context Protocol) bridge, giving it direct access to seven of our core audit tools:

- list_scans: retrieve room scan data

- get_h2k_readiness: assess HOT2000 export readiness

- get_building_data: pull structured building records

- get_current_annotations: read mesh annotations from scans

- get_annotation_gaps: identify what's missing in the annotation set

- annotate_mesh: write annotations directly into 3D scan data

- export_elements: trigger H2K-formatted data exports

The model runs locally. No cloud dependency. No data leaving the building. Just a compact, capable open source model sitting between the auditor and the platform, translating plain-language questions into tool calls, and tool results into useful answers.
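To make the "plain-language question in, tool call out" step concrete, here is a minimal sketch of how a tool call emitted by the model might be routed to a handler. It is illustrative only: the stub handlers, the `TOOLS` registry, the `dispatch` helper, and the shape of the returned values are all hypothetical, and a real bridge would speak MCP's protocol to the platform rather than call Python functions directly.

```python
from typing import Any, Callable

# Hypothetical stand-ins for two of the platform tools. Real handlers would
# query the platform; these return canned values that mirror the tests below.
def list_scans() -> list[dict[str, Any]]:
    return []  # no scans on record

def get_building_data() -> dict[str, Any]:
    return {"percent_complete": 0, "missing_count": 21}  # assumed result shape

# Registry mapping tool names to handlers.
TOOLS: dict[str, Callable[..., Any]] = {
    "list_scans": list_scans,
    "get_building_data": get_building_data,
}

def dispatch(tool_call: dict[str, Any]) -> dict[str, Any]:
    """Route one model-issued tool call to its handler and wrap the result."""
    name = tool_call.get("name")
    args = tool_call.get("arguments") or {}
    handler = TOOLS.get(name)
    if handler is None:
        # Report unknown tools back to the model instead of crashing.
        return {"tool": name, "error": f"unknown tool: {name}"}
    return {"tool": name, "result": handler(**args)}

print(dispatch({"name": "list_scans", "arguments": {}}))
```

Returning an explicit error for unknown tool names, rather than raising, gives the model something it can read and recover from in the next turn.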

What We Asked. What It Did.

The first test was simple: “List my room scans.”

The assistant called list_scans({}) immediately, no hand-holding required, and returned an honest answer: no scans were currently on record. It then offered a natural next step, asking whether we'd like to perform a new scan or check another part of the building data.

That's exactly the right behaviour. It didn't hallucinate scans that didn't exist. It didn't get confused. It called the tool, read the result, and responded usefully.

The second test was more revealing: “Show me the building data.”

Gemma 4 called get_building_data({}), parsed the response, and delivered something that would normally require an auditor to manually cross-reference a checklist against a form:

“The building data is currently incomplete. 0% of required fields have been filled out. There are 21 fields missing across several key areas.”

It then listed every gap, organised by category:

- Building Basics: Year Built, Thermal Mass

- Foundation: Foundation Type, Foundation Depth, Foundation Height

- Orientation: Facing Direction

- Insulation: Wall Insulation RSI, Ceiling Insulation RSI, Basement Wall RSI

- Airtightness: ACH@50, Terrain, House Volume (m³)

- HVAC: HVAC Type, Energy Source, Efficiency

- Hot Water: Type, Energy Source, Hot Water EF

- Ventilation: Has HRV

- Windows: Window ER Override, Window SHGC Override

That's a complete H2K readiness gap analysis, delivered in a single conversational exchange. It's the kind of thing that typically requires an auditor to open the file, scan through every section, and manually note what's missing.
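The arithmetic behind a report like this is simple, and a short sketch shows how the "0% complete, 21 fields missing" figures fall out of the categories listed above. This is an assumption-laden illustration, not the platform's implementation: the `REQUIRED_FIELDS` table is transcribed from the list above, while the nested record layout and the `gap_analysis` function are hypothetical.

```python
# Required fields grouped by category, transcribed from the list above.
REQUIRED_FIELDS: dict[str, list[str]] = {
    "Building Basics": ["Year Built", "Thermal Mass"],
    "Foundation": ["Foundation Type", "Foundation Depth", "Foundation Height"],
    "Orientation": ["Facing Direction"],
    "Insulation": ["Wall Insulation RSI", "Ceiling Insulation RSI", "Basement Wall RSI"],
    "Airtightness": ["ACH@50", "Terrain", "House Volume (m³)"],
    "HVAC": ["HVAC Type", "Energy Source", "Efficiency"],
    "Hot Water": ["Type", "Energy Source", "Hot Water EF"],
    "Ventilation": ["Has HRV"],
    "Windows": ["Window ER Override", "Window SHGC Override"],
}

def gap_analysis(record: dict[str, dict[str, object]]) -> dict[str, object]:
    """Compare a building record (category -> field -> value) to the required fields."""
    missing: dict[str, list[str]] = {}
    for category, fields in REQUIRED_FIELDS.items():
        filled = record.get(category, {})
        # None or an empty string counts as missing; 0 and False are valid values.
        gaps = [f for f in fields if filled.get(f) in (None, "")]
        if gaps:
            missing[category] = gaps
    total = sum(len(fields) for fields in REQUIRED_FIELDS.values())
    missing_count = sum(len(gaps) for gaps in missing.values())
    return {
        "percent_complete": round(100 * (total - missing_count) / total),
        "missing_count": missing_count,
        "missing": missing,
    }

report = gap_analysis({})
print(report["percent_complete"], report["missing_count"])
```

Fields are keyed by category because some names, such as Energy Source, appear under more than one heading.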

Why This Matters

The HOT2000 (H2K) file format is the backbone of Canadian energy modelling. Getting a building to H2K export-ready means populating dozens of fields across envelope, mechanical, and geometry categories. It's painstaking work, and it's exactly the kind of administrative burden that pulls auditors away from the building.

What we demonstrated here is that a locally-run open source model, connected to the right tools via MCP, can act as an intelligent co-pilot for that process. It can:

- ✓ Tell you what's missing, without you having to check

- ✓ Guide the data collection conversation field by field

- ✓ Assess export readiness before you attempt a submission

- ✓ Write annotations directly into scan data via annotate_mesh

- ✓ Trigger exports when the data is complete

This isn't a novelty demo. This is a working prototype of what energy audit assistance looks like when the AI is connected to real tools, real data structures, and real export requirements.
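As a rough sketch of the "guide field by field, then export" pattern in the list above, the function below asks for the next missing field and only proposes an export once nothing is missing. Everything here is hypothetical: `FIELD_ORDER` is a small illustrative subset of the required fields, the flat `(category, field)` record layout is assumed, and `next_step` is not a platform function.

```python
# Illustrative subset of required fields, in the order they would be asked.
FIELD_ORDER: list[tuple[str, str]] = [
    ("Building Basics", "Year Built"),
    ("Foundation", "Foundation Type"),
    ("Airtightness", "ACH@50"),
]

def next_step(record: dict[tuple[str, str], object]) -> dict[str, str]:
    """Return the next question to ask, or an export action if nothing is missing."""
    for category, field in FIELD_ORDER:
        if record.get((category, field)) is None:
            return {"action": "ask", "prompt": f"What is the {field} ({category})?"}
    # Every field in this subset is filled, so the export tool can be called.
    return {"action": "call_tool", "tool": "export_elements"}

print(next_step({}))
```

Keeping the export behind this check is what lets the assistant assess readiness before a submission is attempted.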

Open Source, On-Premise, and Actually Capable

One of the most significant things about this test is what the model is: Gemma 4, running locally via Ollama (gemma4:e4b). Not GPT-4. Not Claude. An open source model that any practice can run on their own infrastructure, with their own data, under their own control.

The energy industry has legitimate reasons to be cautious about cloud AI. Building data is sensitive. Client information is confidential. Audit workflows can't depend on a third-party API being available on site.

A locally-run model connected to a local MCP server, with no internet requirement, changes that calculus entirely. The intelligence is on your hardware. The tools are your tools. The data stays where it belongs.
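For readers who want to see what "local" means in practice, the sketch below builds (but does not send) a chat request for an Ollama server on the same machine. It assumes Ollama's default address of `localhost:11434` and its `/api/chat` endpoint with a `tools` field; the tool schema for `list_scans` is a guess at a reasonable shape, and `build_chat_request` is a hypothetical helper rather than part of our bridge.

```python
import json
import urllib.request

# Ollama's default local address; nothing in this request leaves the machine.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"

def build_chat_request(question: str) -> urllib.request.Request:
    """Build a POST request asking the local model a question with one tool on offer."""
    payload = {
        "model": "gemma4:e4b",
        "messages": [{"role": "user", "content": question}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "list_scans",
                    "description": "Retrieve room scan data",
                    # Assumed schema: the tool takes no arguments.
                    "parameters": {"type": "object", "properties": {}},
                },
            }
        ],
        "stream": False,
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

request = build_chat_request("List my room scans.")
# Sending is left to the caller, e.g. urllib.request.urlopen(request),
# which requires a running Ollama server with the model already pulled.
print(request.full_url)
```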

What's Next

This is an early test, and we're being honest about that. Gemma 4 handled tool calling well, produced accurate gap analysis, and behaved sensibly when data was missing. There are deeper tests ahead: multi-turn annotation sessions, guided H2K completion workflows, and scan-to-model pipelines. We'll be sharing results as we go.

But the fundamental point is already proven: open source language models, connected to purpose-built energy audit tooling via MCP, can deliver the kind of conversational, intelligent field assistance that this industry has needed for a long time.

Energy auditing is not data entry. It is the ability to see, and understand, the building as a system.

AI that handles the data entry so the auditor can focus on the building? That's energy intelligence working exactly as it should.

Want to follow the development of EI's AI audit assistant? Get in touch. We're building this in the open, with practitioners at the centre.
